What does that mean?įor your connection to the Internet you’ll have a router. The Airport Express and Airport Extreme work mainly as WIFI routers and boosters. You’ll see that not all the Time Capsules have internal drives. Apple Time Capsule Model Comparison Table From design to internal storage capacity. You’ll find below a review of the Airport Time Capsule key points. Using Your Time Capsule For Backup With Time MachineĪpple Airport Time Capsule Review Features Overview.Using Your Time Capsule To Store Your Documents On.

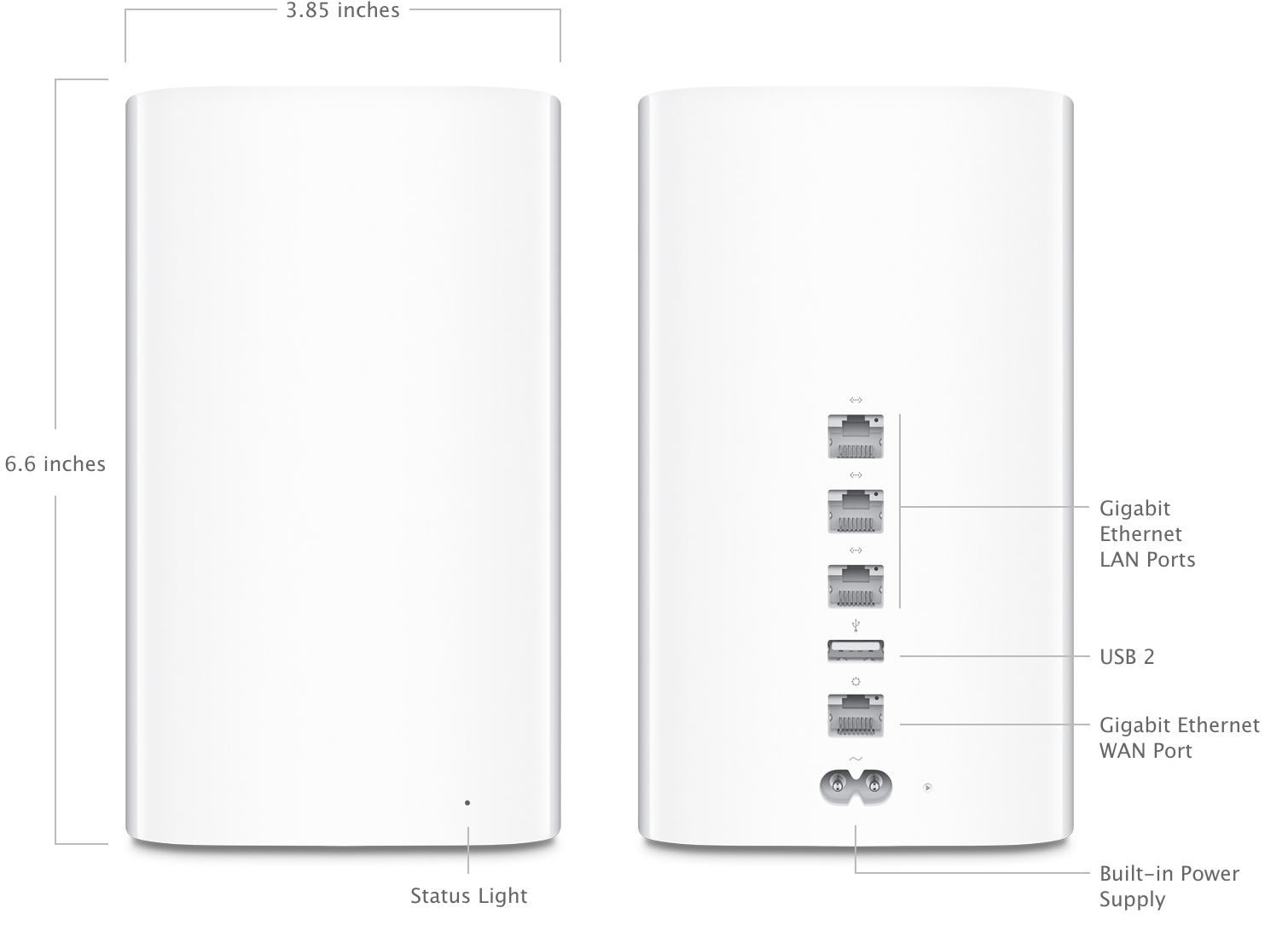

Expanding And Extending Your Wi-Fi Range.Apple Time Capsule Remote File And Printer Sharing.Airport Time Capsule For File Storage Or Backups.Can You Use Your Time Capsule As An External Storage Device?.Connecting Your Apple Time Capsule Base Station.Time Capsule's Better WIFI Speed Support For Your Apple Family.UnBoxing The Time Capsule YouTube Video.Apple Time Capsule Wireless Range And Speed.Benefit Tear Down Of The Apple Time Capsule.Apple Time Capsule Model Comparison Table.Apple Airport Time Capsule Review Features Overview.Airport Time Capsule Review 2023 Verdict.And at no extra cost to you this site earns a commission through image links, Amazon button and text links should you buy. *Disclosure: This article contains affiliate links. You’ll need to go refurbished or buy second user. And when new stocks go and you wanna buy an Apple Time Capsule. And its tie into the rest of your Apple eco system. And be grateful for its large WIFI range. Buy this 5th generation Airport Time Capsule. There are several reasons why the AirPort Time Capsule is still worth it for a Mac user today. Then this product review will help you make up your mind about whether or not it’s worth buying one. If you don’t have an Apple Airport Time Capsule yet. So, if you want to buy one of these, then you need to act fast! It’s discontinued by Apple and now you can only buy refurbished units. The Apple Airport Time Capsule is a great device.


BlueStacks App Player is useful software that lets you run any application designed for Android on your Windows computer. If you already have an Android device, BlueStacks App Player for Windows will let you control the apps on your phone directly from your desktop. It will also sync all data so that you don't have to log in to each application one by one. Thanks to the most recent update, BlueStacks App Player now provides users with additional features as well as a high-end performance boost. This means that if you've downloaded the application to play Android games on your Windows PC, you can do so while enjoying better performance than ever before. According to the developers, BlueStacks App Player's latest version is six times faster than the latest Android devices. It also keeps lag and stuttering to a minimum, which can help gamers enjoy an enhanced gaming experience.

What are the features of the BlueStacks App Player?

One of the best things about downloading BlueStacks App Player is that it gives you complete control over keyboard mapping. Unlike other emulators, BlueStacks lets you create custom keyboard controls so that you can play any game easily. Since it also features touchscreen support, you can easily play any game on a detachable or touchscreen PC without any hassle. In addition to this feature, the Android emulator also supports mouse controls, so you can aim with the mouse and shoot quickly.

Another feature lets you download mobile applications on your phone and push them to your desktop using Cloud Connect. It also syncs all data so that you don't have to log in again to an app that you're already logged into on your phone.

My source is JSON with nested arrays & structures. I have to decide how to store this data, considering:

- Large volume of new data streaming in real time (20m/day)
- End users want to use 'traditional' SQL

As far as I can see, my options are to make use of the SUPER data type, or just convert everything to traditional relational tables & types. (Even if I store the full JSON as a SUPER, I still have to serialize critical attributes into regular columns for the purposes of distribution/sort keys.)

Regardless, I've been trying to weigh up the pros & cons of SUPER vs. traditional relational tables:

(1) SUPER
- Very easy to ingest data, with low load on the cluster
- End users would have to learn PartiQL and deal with unnesting & serialization etc.
- Maybe an additional load on the cluster & a performance impact when executing end-user queries?

(2) 'Traditional' Relational Tables & Types
- (a) Load as SUPER, but then use PartiQL to unnest, serialize and store in relational tables
  - Would result in some massive tables for the 'tag' nodes
- (b) Use Lambda to pre-unnest & serialize the JSON, then insert/copy directly into relational tables
  - No load on the cluster to ingest/process incoming data
  - Cost/maintenance of the Lambda/other process to transform the JSON

If I am converting to a relational structure (2b), I could simply store the data in S3 and use Redshift Spectrum to query it.

Is there a 'standard' for this scenario?

You will not want to leave things as a monolithic JSON. Any data that will be repeatedly queried in analytics will want to be its own column, and any data that will be commonly used in a WHERE clause, GROUP BY, partition, join condition, etc. will likely need to be its own column. The database work to expand the JSON once at ingestion will be dwarfed by the work to repeatedly expand it for every query.

I'd expect any data that is common to 90% of the JSON records to belong in its own column. JSON elements that are rare, unique, or of little analytic interest can be kept in SUPER columns that hold just this subset of the JSON. The data size increase will be less than you think; Redshift is good at compressing columns. The ingestion load is unlikely to be a major concern, but if it is, the Lambda approach is a reasonable way to extend the compute resources to address it. I really don't expect this, but if needed it can be folded easily into the existing ETL processes.

A hazard you will face is that users will reference only the JSON and not the extracted columns. Re-extracting the same data repeatedly costs. I'd consider NOT keeping the entire JSON in the main fact tables, only the JSON pieces that represent data not otherwise in columns. Keeping the original JSON documents in a separate table keyed with an identity column will allow joining if some need arises, but the goal will be not to need to do this.

Spectrum does not look like a good fit for this use case. Spectrum does well when the compute elements in S3 can apply the first-level WHERE clauses and simple aggregations. I just don't see Spectrum being able to do that work on this data, so it would end up sending the entire JSON data to Redshift repeatedly. This will make things slow and tie up a ton of network bandwidth. That said, storing the full original JSON with an identity column in S3, and having all the expanded columns plus the left-over JSON elements in a native Redshift table, does make sense.
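The answer's recommendation can be illustrated with a small Python sketch of the kind of pre-processing a Lambda or other ETL step might run before loading: promote JSON keys present in roughly 90% of records to their own columns, keep the rare leftovers as JSON text for a SUPER column, and unnest an array such as the 'tag' nodes into a separate child table. This is a sketch under stated assumptions, not a Redshift API. The array field `tags`, the surrogate key `record_id`, the `_`-joined column names and the 0.9 threshold are all hypothetical. The loading step itself (for example, writing files for `COPY`) is left out.

```python
import json
from collections import Counter
from typing import Any

# Hypothetical settings for illustration only (not from the original question).
ARRAY_FIELD = "tags"       # top-level array unnested into a child table
PROMOTE_THRESHOLD = 0.9    # promote keys present in >= 90% of records


def flatten(obj: dict[str, Any], prefix: str = "") -> dict[str, Any]:
    """Flatten nested objects into '_'-joined keys; lists stay as values."""
    flat: dict[str, Any] = {}
    for key, value in obj.items():
        name = f"{prefix}{key}"
        if isinstance(value, dict):
            flat.update(flatten(value, f"{name}_"))
        else:
            flat[name] = value
    return flat


def choose_columns(records: list[dict[str, Any]]) -> list[str]:
    """Pick flattened keys common enough to deserve their own column."""
    counts = Counter(
        key for rec in records for key in flatten(rec) if key != ARRAY_FIELD
    )
    cutoff = PROMOTE_THRESHOLD * len(records)
    return sorted(key for key, n in counts.items() if n >= cutoff)


def split_record(
    record_id: int, rec: dict[str, Any], columns: list[str]
) -> tuple[dict[str, Any], list[dict[str, Any]]]:
    """Split one record into a fact row and child rows for its array field."""
    flat = flatten(rec)
    tags = flat.pop(ARRAY_FIELD, None) or []
    fact: dict[str, Any] = {"record_id": record_id}
    for col in columns:
        fact[col] = flat.pop(col, None)  # missing keys become NULLs
    # Anything not promoted stays as JSON text, e.g. for a SUPER column.
    fact["leftover"] = json.dumps(flat, sort_keys=True) if flat else None
    tag_rows = [{"record_id": record_id, "tag": tag} for tag in tags]
    return fact, tag_rows


if __name__ == "__main__":
    raw = [
        '{"user": {"id": 1, "country": "NZ"}, "event": "click", "tags": ["a", "b"]}',
        '{"user": {"id": 2, "country": "AU"}, "event": "view", "tags": ["c"], "debug": true}',
    ]
    records = [json.loads(line) for line in raw]
    columns = choose_columns(records)
    for i, rec in enumerate(records):
        fact, tag_rows = split_record(i, rec, columns)
        print(fact)
        print(tag_rows)
```

The sketch chooses the column list once, from a sample of records, rather than per record. This keeps the table schema stable. In practice you would probably freeze that list and rerun the frequency check only when the source format changes.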
